Azure Arc, have you tried it out already?

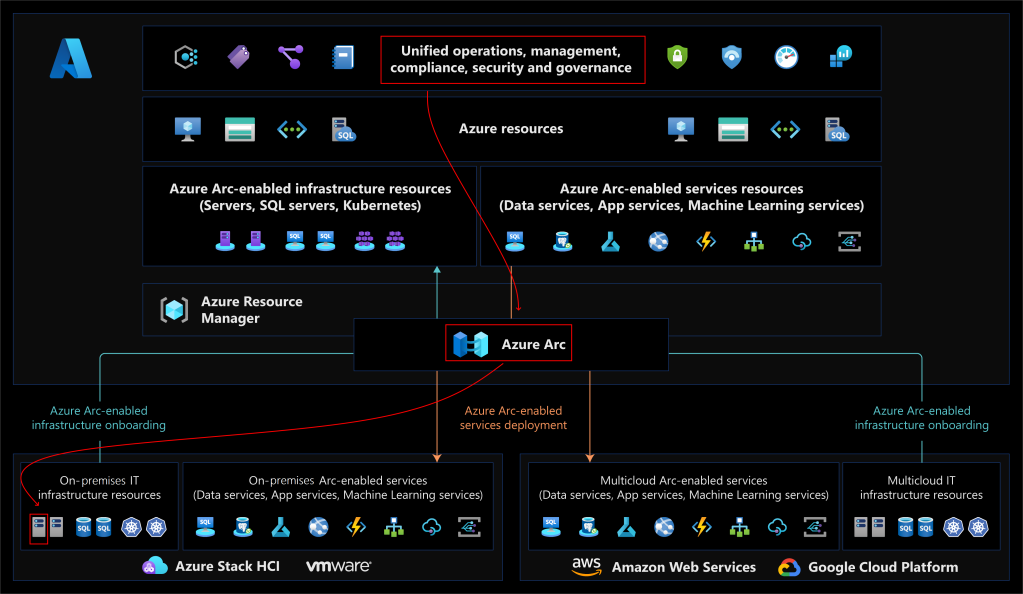

The pitch for Azure Arc sounds great:

> Azure Arc enables you to extend Azure management capabilities to hybrid environments, including on-premises datacenters and third-party cloud providers. You can use Azure Arc to manage and configure your Windows and Linux server machines and Kubernetes clusters that are hosted outside of Azure. You can also use Azure Arc to introduce Azure data services to hybrid environments.

So, we can manage servers, Kubernetes clusters, and data services anywhere in the world as if they lived in Azure.

Remote server management is a very interesting feature from an IoT point of view: an IoT developer wants control over all edge devices without having to visit each one in person to manage it.

Could zero-touch maintenance be achieved with Azure Arc?

Today, we look at a small but very important part of Azure Arc, Azure Arc-enabled servers, to answer that question.

Let’s see how easy it is.

Continue reading “Getting started with Azure Arc-enabled servers”